Editor's Note

FinOps & Beyond, brought to you by CloudXray AI, is a weekly newsletter for practitioners tracking the forces shaping cloud, cost, and AI — and what those shifts mean for operating models, accountability, and spend decisions.

For sponsorship opportunities, click here to schedule time to talk with the team.

General Tech Trends Analysis

Why Is Cost Left Out in the Architectural Cold?

Building for Performance, Resilience, & Security Without Cost at the Table

I recently read an article by Jordan Hornblow. The post walks through how he built a small music API called Lush Aural Treats. Nothing exotic. Just a simple service that parses email album submissions, fetches metadata from Spotify, stores results, and notifies users.

He built on Amazon Web Services because that is what he knew, following a familiar architecture pattern: Cloudflare in front, an Application Load Balancer routing traffic, ECS Fargate tasks in a private subnet, and a NAT gateway handling outbound API calls.

He has always been cost conscious and expected this to stay inexpensive. Unfortunately, by the time he looked at the invoice after only a couple of months, it was roughly $1,000.

Why? The ALB charged whether traffic arrived or not. The NAT gateway billed by the hour and by the gigabyte. The baseline cost of the architecture, before the product did anything, was around $100 per month, and then a modest usage spike did the rest.
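The arithmetic behind an always-on baseline can be sketched in a few lines. This is a rough illustration, not a reconstruction of Jordan's invoice: the hourly and per-gigabyte rates below are placeholder assumptions, and real prices vary by region and change over time.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month


def monthly_baseline(hourly_rates: dict[str, float], hours: int = HOURS_PER_MONTH) -> float:
    """Cost of always-on components before any traffic arrives."""
    return sum(hourly_rates.values()) * hours


def monthly_total(
    hourly_rates: dict[str, float],
    nat_gb_processed: float,
    nat_rate_per_gb: float,
    hours: int = HOURS_PER_MONTH,
) -> float:
    """Baseline plus the usage-based NAT data processing charge."""
    return monthly_baseline(hourly_rates, hours) + nat_gb_processed * nat_rate_per_gb


# Placeholder rates (assumptions for illustration, not a price list).
example_rates = {"load_balancer": 0.0225, "nat_gateway": 0.045}

if __name__ == "__main__":
    idle = monthly_baseline(example_rates)
    busy = monthly_total(example_rates, nat_gb_processed=500, nat_rate_per_gb=0.045)
    print(f"idle month: ${idle:.2f}")
    print(f"month with 500 GB through NAT: ${busy:.2f}")
```

The point of separating the two functions is that the first number is owed even when the service handles zero requests.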

He tore it down and rebuilt it. Removed the NAT gateway. Simplified the routing. The next iteration gave him a near $0 baseline cost. Same workload.

The lesson here is not that he made a mistake. The lesson is that he made the standard choice, which optimizes for performance, resilience, and security and does not include cost as an input.

AWS reference architectures are designed for performance, resilience, and security: multi-AZ, dedicated load balancers, private network isolation. Cost is not a primary input into those designs. Those requirements are real for workloads that need them. The problem is that these patterns have become defaults. Engineers copy and reuse them across internal tools, staging environments, side projects, and MVPs that will never see the traffic profile those architectures were built to support. The cost profile travels with the pattern, whether or not the performance and resilience requirements do.

This is what building for performance, resilience, and security looks like when cost is not part of the decision: you get all three, at a price you did not plan for.

AWS S3: What You Don't See Still Costs You

Amazon Web Services S3 has been running since 2006. It now has more than 100 API actions and works exactly as documented. The documentation is thorough. The costs are real, predictable, and preventable, if you know where to look.

Most teams don't, until the bill arrives.

Versioning is the first trap. Enabling it is explicit, which creates the illusion of control. The actual storage consumed by previous object versions is invisible in the standard bucket view. Finding it in detail typically means setting up S3 Inventory and running a SQL query against the output, a step most teams skip because nothing prompts them to do it. The versions accumulate. The bill grows quietly.
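As a rough sketch of what that inventory analysis computes, the aggregation can be done in plain Python instead of SQL. The column order and the sample rows below are assumptions for illustration; a real report's column order comes from the `fileSchema` field in its manifest, and the report must be configured to include all versions.

```python
import csv
import io
from collections import defaultdict

# Assumed column order for this sketch; check the manifest of a real report.
COLUMNS = ["Bucket", "Key", "VersionId", "IsLatest", "IsDeleteMarker", "Size"]


def noncurrent_bytes_by_prefix(report_csv: str, depth: int = 1) -> dict[str, int]:
    """Sum the size of noncurrent object versions, grouped by key prefix."""
    totals: dict[str, int] = defaultdict(int)
    reader = csv.DictReader(io.StringIO(report_csv), fieldnames=COLUMNS)
    for row in reader:
        # Skip current versions and delete markers (markers carry no data).
        if row["IsLatest"].lower() == "true" or row["IsDeleteMarker"].lower() == "true":
            continue
        # Keys without a slash are grouped under the key itself.
        prefix = "/".join(row["Key"].split("/")[:depth])
        totals[prefix] += int(row["Size"] or 0)
    return dict(totals)


# Invented sample rows: one object with two older versions, one unversioned log.
SAMPLE = '''"demo-bucket","albums/a.json","v3","true","false","1200"
"demo-bucket","albums/a.json","v2","false","false","1100"
"demo-bucket","albums/a.json","v1","false","false","1000"
"demo-bucket","logs/x.log","v1","true","false","50"
'''

if __name__ == "__main__":
    print(noncurrent_bytes_by_prefix(SAMPLE))
```

Grouping by prefix is a design choice for readability: it tends to show which application or pipeline is producing the hidden versions.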

Incomplete multipart uploads are worse. When an upload fails midway, from a network drop or application crash, the individual parts do not expire. They have no default TTL. They are not visible in the UI. They simply sit there, accruing storage cost for data that serves no purpose and that most teams do not know exists.
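A minimal sketch of how stale uploads could be identified once they have been listed. The dictionary shape mirrors what boto3's `list_multipart_uploads` returns (`Uploads`, `Key`, `UploadId`, `Initiated`), but no AWS call is made here and the sample data is invented.

```python
from datetime import datetime, timedelta, timezone


def stale_uploads(listing: dict, max_age_days: int, now: datetime) -> list[tuple[str, str]]:
    """Return (key, upload_id) pairs for uploads initiated before the cutoff."""
    cutoff = now - timedelta(days=max_age_days)
    return [
        (upload["Key"], upload["UploadId"])
        for upload in listing.get("Uploads", [])
        if upload["Initiated"] < cutoff
    ]


# Invented sample data in the shape of a list_multipart_uploads response.
NOW = datetime(2026, 1, 31, tzinfo=timezone.utc)
SAMPLE_LISTING = {
    "Uploads": [
        {
            "Key": "albums/big.zip",
            "UploadId": "upload-old",
            "Initiated": datetime(2026, 1, 2, tzinfo=timezone.utc),
        },
        {
            "Key": "albums/new.zip",
            "UploadId": "upload-new",
            "Initiated": datetime(2026, 1, 30, tzinfo=timezone.utc),
        },
    ]
}

if __name__ == "__main__":
    for key, upload_id in stale_uploads(SAMPLE_LISTING, max_age_days=7, now=NOW):
        print(f"stale: {key} ({upload_id})")
```

Each pair returned is what a cleanup job would pass to an abort call; a lifecycle rule achieves the same result without running code on a schedule.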

Storage classes add a third layer. S3 has no bucket-level default class. Every object lands in Standard unless the upload client explicitly requests otherwise. Lifecycle rules that transition objects to Intelligent-Tiering run on a schedule, not instantly, so there is always a billing window at the Standard rate regardless of intent. If you want consistent class behavior, you have to enforce it in a bucket policy. Most teams do not, because they assume the lifecycle rule covers it.
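One way such a bucket policy might look, built here as a Python dictionary and serialized to JSON. The bucket name, statement ID, and chosen storage class are hypothetical; the sketch relies on the `s3:x-amz-storage-class` condition key and should be reviewed against your own access model before use.

```python
import json


def require_storage_class_policy(bucket: str, storage_class: str) -> dict:
    """Deny PutObject requests that do not ask for the given storage class."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyUploadsWithoutRequiredStorageClass",  # hypothetical name
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:PutObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
                # A negated operator also matches when the header is absent,
                # so uploads that omit the storage class are denied too.
                "Condition": {
                    "StringNotEquals": {"s3:x-amz-storage-class": storage_class}
                },
            }
        ],
    }


# "example-bucket" is a placeholder name.
policy_json = json.dumps(
    require_storage_class_policy("example-bucket", "INTELLIGENT_TIERING"), indent=2
)

if __name__ == "__main__":
    print(policy_json)
```

Because this is a deny rule, any client that does not set the storage class starts failing on upload, which is why upstream teams need notice first.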

None of this is a bug. These are designed behaviors. S3's cost model rewards teams who understand the internals. Inattention is not punished with a warning. It is punished with a bill, and the bill looks like normal storage growth until you actually inspect what you are storing.

The pattern is the same as Jordan Hornblow's architecture: the default is not cost-neutral. The default is optimized for AWS.

Building Cost-Aware: The OpenClaw Challenge

I've been tinkering with OpenClaw for a couple of months now, like many others. For those unfamiliar or not closely following the AI space, it is a self-hosted AI gateway that connects messaging apps such as WhatsApp, Telegram, Discord, and iMessage to LLMs. You run the gateway on your own infrastructure, and it becomes the bridge between wherever you message from and an always-available AI assistant. Self-hosted and open source. The architecture gives you control over your data and infrastructure. Sounds appealing. What it does not give you is free inference.

Every message that flows through the gateway needs to be sent to a model, which acts as the brain. That model costs something. This is where the real engineering starts.

The free-tier models work. They are not the same as the best available paid models, and the quality gap is real: less precise reasoning, more hedging, more cleanup required.

So what do you do? The work the model does not handle well shifts back to you. You start to build and tune. You tighten prompts to reduce token usage. You evaluate which tasks can tolerate a smaller model and which require a stronger one. You explore different plugins. You compare accessing models via OAuth through Pro accounts against direct API calls, provider by provider, to determine which cost structure fits your usage pattern.

Working with OpenClaw has made cost a first-class input in real time. Not a quarterly line item, but a live constraint that shapes every configuration decision: which model for which task, whether a Pro subscription at a flat rate beats per-token pricing at your current volume, and whether a response quality tradeoff is actually worth the cost.
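The flat-rate versus per-token question comes down to a break-even calculation, sketched below. All prices, token counts, and the subscription fee are hypothetical inputs, not figures from any provider, and the sketch ignores rate limits and usage caps that flat-rate plans may impose.

```python
def per_token_monthly_cost(
    messages: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Monthly API cost for a given message volume and average token counts."""
    per_message = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000
    return messages * per_message


def breakeven_messages(
    flat_monthly_fee: float,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Message volume at which per-token spend equals the flat fee."""
    per_message = per_token_monthly_cost(
        1, input_tokens, output_tokens, input_price_per_million, output_price_per_million
    )
    if per_message <= 0:
        raise ValueError("per-message cost must be positive")
    return flat_monthly_fee / per_message


if __name__ == "__main__":
    # Hypothetical inputs: 1,000 input and 500 output tokens per message.
    volume = breakeven_messages(20.0, 1000, 500, 2.0, 4.0)
    print(f"flat fee pays off above roughly {volume:.0f} messages per month")
```

Below the break-even volume, per-token pricing is cheaper; above it, the flat rate wins, provided the plan's limits actually allow that volume.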

Why Cost Must Be Included

Most architectural reviews ask whether the design will perform, whether it will hold, and whether it is secure. Cost enters later, in the billing console, the quarterly review, or the post-mortem after a surprise invoice.

Jordan did not build a bad architecture. He built the AWS Well-Architected one, which assumed enterprise-level resilience he did not need. The S3 costs described earlier do not accumulate because someone made a wrong call. They accumulate because no one included cost in the original design and no one was watching the right metrics. In both cases, cost was downstream of the decision that produced it. By the time it showed up, the decision was already in production.

That gap is the real problem. Not the NAT gateway. Not the unconfigured lifecycle rule. The structural habit of treating cost as something you measure after you build, rather than something you design for while you are building.

Pull it upstream. Into the architecture review. Into model selection. Into configuration choices. Give cost a seat at the table as the fourth criterion for whether what you built is actually good.

Performance. Reliability. Security. Cost. One decision. Four criteria.

FinOps Signal

Structural Trend Quick Takeaway

Cost Discipline Is an Architecture Problem

Here is the sequence most FinOps programs run on: build the infrastructure, generate the bill, analyze the bill, find the waste, try to fix it. The feedback loop runs backward. The bill is the signal. The architecture is already in production.

That sequence has a structural flaw. By the time cost shows up in a dashboard, the decision that caused it was made weeks or months earlier, often by someone who has already moved on to the next sprint. The FinOps team inherits the outcome of architectural choices they were not part of.

This is why “better visibility” keeps hitting a ceiling. Visibility is a retrospective instrument. It tells you what happened. It does not tell you what was decided, who decided it, or whether a cheaper path was ever considered.

Two patterns are worth naming.

The first is enterprise pattern inheritance. Hyperscaler reference architectures are designed for organizations that need multi-AZ redundancy, dedicated load balancers, and private network isolation. Those requirements are real for regulated workloads, high-availability SaaS products, and production systems that genuinely need that resilience. The problem is that these patterns have become defaults. Engineers copy and reuse them across internal tooling, side projects, staging environments, and MVP workloads that will never see the traffic profile the architecture was designed to support. The cost profile travels with the pattern, whether or not the resilience requirement does.

The second is hidden object accumulation in storage. S3 is cheap until it is not. Version history accumulates silently, not in the object browser or obvious metrics, but in storage that requires an inventory query to surface. Incomplete multipart uploads do not expire. They are not visible in the UI. They simply exist, accruing cost. Storage classes default to Standard unless an upload client explicitly requests otherwise, and lifecycle transitions run on a schedule, not instantly, so the window between object creation and class optimization always incurs Standard rates.

None of these are bugs. They are designed behaviors. The S3 cost model rewards teams who understand how it works and penalizes teams who do not.

What both patterns share is that cost is embedded at the point of configuration, not at the point of operation. No amount of cost monitoring fixes a NAT gateway that should not exist. No anomaly alert catches S3 versions that were never expected to accumulate. The visibility layer is downstream of the decision layer by design.

The implication for FinOps is uncomfortable: the highest-leverage intervention is not a better dashboard. It is a seat in the architectural conversation before infrastructure is provisioned. FinOps practitioners who can engage with engineering on design choices, not just analyze billing outputs, have a structurally different impact.

This is the practitioner gap the discipline has not fully named. The FinOps Framework has elevated FinOps toward executive alignment. What it has not solved is the gap between the billing layer and the build layer.

Cost-aware architecture is not a tool category. It is a practice. The question for any FinOps team is whether they have influence at the point where cost decisions are made, or whether they are producing reports about decisions that are already three sprints old.

Signal: Cost discipline is not achieved through better visibility; it is determined at the moment architectural decisions are made.

FinOps Industry

News or Market Updates

The Flexera 2026 State of the Cloud Report: Cost Is Moving to the Front of the Line

Flexera surveys cloud decision-makers every year. The fifteenth edition has one finding that deserves more attention.

The challenge of optimizing costs after migration, long a fixture in the top three, has fallen to fifth place. It now ranks behind assessing on-premises versus cloud costs upfront and selecting the right instance before deployment.

Flexera's interpretation is direct: more organizations are adopting a shift-left approach with FinOps, considering costs earlier in the architectural planning phase, before deployment.

This aligns with the argument throughout this issue. Jordan Hornblow's NAT gateway was not a post-production optimization problem. It was an architectural selection that showed up in the bill later. S3 costs accumulate because storage behavior is not modeled before enabling versioning. In both cases, cost is determined at the moment of configuration, not discovered in the dashboard.

The Flexera data suggests practitioners are starting to recognize this before migration, not after. That is behavioral evidence, not aspiration. It shows up in how teams rank their own challenges.

Two numbers reinforce why timing matters. Wasted cloud spend increased to 29% this year, reversing a five-year downward trend, driven by the cost complexity of AI workloads and new PaaS services. As teams improved at managing infrastructure they understood, a new spend category reset the learning curve. At the same time, organizations tracking unit economics increased nine percentage points year over year. Unit metrics, such as cost per inference or cost per transaction, connect architectural decisions to business outcomes. You cannot track them if cost was not part of the design.

The shift is real. Making it structural is the work that remains.

FinOps Company Spotlight

If you would like your company included in the Spotlight, contact the CloudXray AI Team.

Category: Managed Services & Consulting

What They Do: FinOps Consulting & Advisory Services; Owners & maintainers of the single largest FinOps company directory (finops.cloudxray.ai)

Why It Matters: Companies still need guidance on implementing FinOps and understanding the landscape of companies that exist

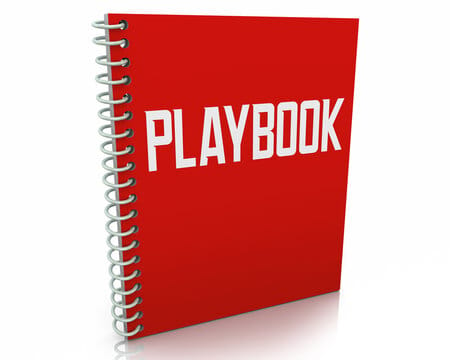

Operator Playbook

Five S3 Defaults That Cost You More Than They Should

S3 is cheap until it isn't. Here are five specific behaviors that generate cost without obvious signals — and what to do about each.

Noncurrent versions accumulate forever.

When versioning is enabled, S3 retains every previous object version until you explicitly expire them. These versions are not visible in the default bucket view. They are included in storage metrics but not obvious without deeper inspection. Add a lifecycle rule to expire noncurrent versions after a retention window aligned to your recovery requirements. For most teams using versioning as a safeguard, 7–30 days is sufficient.

Incomplete multipart uploads don't expire.

If an upload is initiated and fails (network issue, application crash), the individual parts remain indefinitely. They are not visible in the UI. While they can be listed via API or CLI, that is impractical at scale. The fix is a lifecycle rule using AbortIncompleteMultipartUpload with a days-after-initiation threshold. Seven days is a reasonable default for most workloads.

Storage classes are set by the upload client, not the bucket.

There is no bucket-level default storage class. Objects land in Standard unless the client explicitly specifies otherwise. Lifecycle transitions to Intelligent-Tiering or other classes run asynchronously, not immediately, so there is always a window where objects are billed at Standard rates. If you want consistent class behavior, you can enforce it via a bucket policy that requires a specific storage class on upload. This is a meaningful change to communicate to upstream teams before implementing.

Lifecycle rules run on a schedule.

Even when correctly configured, lifecycle rules do not execute instantly. Transitions and expirations occur on S3’s internal schedule. The gap between object creation and the first eligible transition is billed at the higher rate. Factor this into cost modeling, especially at scale.

Inventory is not automatic.

Understanding what versions and storage classes exist in a bucket often requires enabling S3 Inventory and analyzing the output. This is not enabled by default. While other tools like S3 Storage Lens provide visibility, detailed analysis typically requires inventory-level data. Enable inventory for versioned buckets and run periodic queries to detect accumulation before it compounds.
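The lifecycle rules described above could be expressed as a single configuration, sketched here as a Python dictionary in the shape boto3's `put_bucket_lifecycle_configuration` accepts. The rule IDs and default day counts are illustrative assumptions, and minimum transition ages differ by storage class, so check them for the class you choose.

```python
def build_lifecycle_configuration(
    noncurrent_days: int = 30,
    abort_multipart_days: int = 7,
    transition_days: int = 30,
    transition_class: str = "INTELLIGENT_TIERING",
) -> dict:
    """Build a lifecycle configuration covering the first three defaults above."""
    whole_bucket = {"Prefix": ""}  # empty prefix applies the rule to every object
    return {
        "Rules": [
            {
                "ID": "expire-noncurrent-versions",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": whole_bucket,
                "NoncurrentVersionExpiration": {"NoncurrentDays": noncurrent_days},
            },
            {
                "ID": "abort-incomplete-multipart-uploads",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": whole_bucket,
                "AbortIncompleteMultipartUpload": {
                    "DaysAfterInitiation": abort_multipart_days
                },
            },
            {
                "ID": "transition-current-objects",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": whole_bucket,
                "Transitions": [
                    {"Days": transition_days, "StorageClass": transition_class}
                ],
            },
        ]
    }


if __name__ == "__main__":
    config = build_lifecycle_configuration(noncurrent_days=14)
    for rule in config["Rules"]:
        print(rule["ID"])
```

Applying it would look something like `s3.put_bucket_lifecycle_configuration(Bucket="example-bucket", LifecycleConfiguration=config)` with a boto3 S3 client. That call replaces the bucket's entire existing lifecycle configuration, so merge with any rules already in place.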

None of these are exotic configurations. They reflect the gap between how S3 behaves by default and how most teams assume it behaves.