Editor's Note

FinOps & Beyond, brought to you by CloudXray AI, is a weekly newsletter for practitioners tracking the forces shaping cloud, cost, and AI — and what those shifts mean for operating models, accountability, and spend decisions.

Table of Contents

General Tech Trends Analysis

AI Efficiency, Layoffs, and the Unit Economics Beneath the Surface

Tech layoffs are back in the headlines, but the explanation has changed.

Increasingly, companies say the reason is AI efficiency — smaller teams, faster development cycles, and automated workflows. Organizations are describing themselves as becoming “AI-native,” suggesting that intelligent systems allow them to operate with fewer people while maintaining productivity.

But beneath the headlines, something more structural may be happening.

The shift is not only about layoffs. It is about where technology companies are spending money.

Labor growth is flattening.

Infrastructure investment is accelerating.

For practitioners responsible for cloud platforms, SaaS environments, and AI workloads, the question is not simply whether AI improves productivity.

The more important question is whether it improves unit economics.

Organizations Are Reallocating Cost

Recent announcements from companies like Block, Amazon, and Oracle illustrate something important about the current moment in technology.

Organizations are not simply reducing cost.

They are reallocating cost.

Some of that cost is being removed from payroll. But a growing share is being redirected toward infrastructure — particularly the infrastructure required to train and run AI systems.

Understanding that shift requires looking at how several companies are navigating the transition.

Block: AI Efficiency Meets Workforce Reduction

Earlier this year, fintech company Block, led by Jack Dorsey, announced plans to cut over 4,000 jobs — nearly half of its workforce — as part of an effort to integrate artificial intelligence more deeply into its operations.

Dorsey framed the move as a shift toward AI-enabled productivity, arguing that new tools would allow smaller teams to perform the same work more efficiently. Investors reacted positively in the near term.

However, analysts and former employees questioned whether AI alone explained the layoffs. Block’s workforce expanded significantly during the pandemic era, growing from roughly 4,000 employees in 2019 to nearly 13,000 by 2023, which some critics say created organizational inefficiencies.

In that context, layoffs attributed to AI may also reflect a broader pattern: companies correcting pandemic-era hiring while repositioning for the next phase of technology investment.

The AWS Example: Infrastructure Becomes the Core Asset

If Block illustrates the software-company side of the shift, Amazon illustrates the infrastructure side.

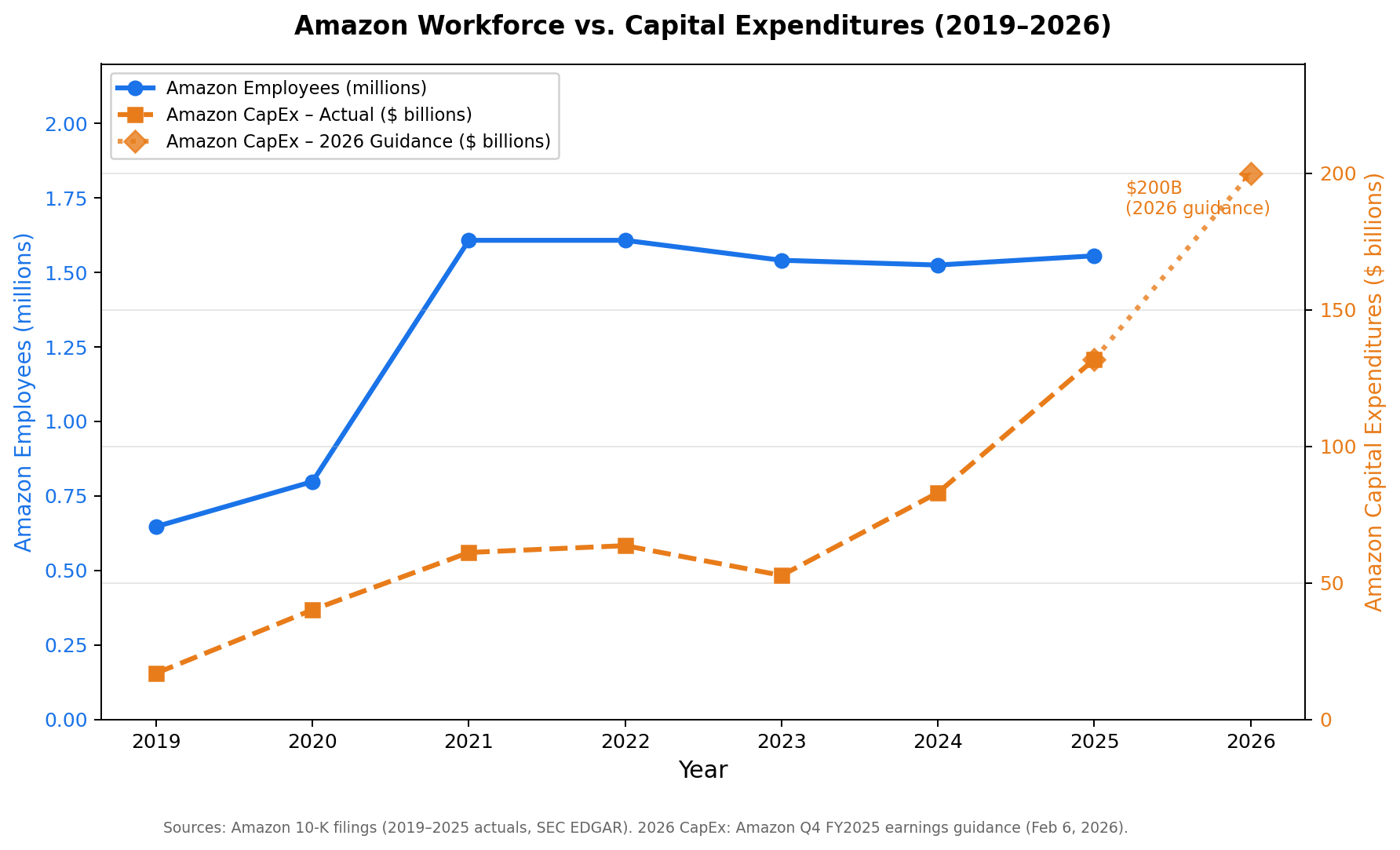

The company has dramatically increased capital expenditures as demand for cloud and AI computing accelerates. Amazon reported $89.9 billion in capital expenditures through the first three quarters of 2025 alone, much of it tied to AI infrastructure.

Looking forward, Amazon outlined plans for as much as $200 billion in capital outlays to expand data centers and AI infrastructure — one of the largest technology investment programs in the industry.

At the same time, Amazon has reduced parts of its corporate workforce. The company confirmed around 16,000 job cuts globally as part of restructuring aimed at improving efficiency and reducing bureaucracy.

Executives have also indicated that increasing use of AI tools could reduce the need for some roles over time, particularly those involving repetitive tasks.

Taken together, these moves illustrate a deeper pattern.

Companies are reallocating cost, shifting resources from labor toward infrastructure.

Chart: Amazon Workforce vs Infrastructure Investment

Amazon’s workforce expanded rapidly during the pandemic hiring surge before stabilizing. At the same time, capital investment — increasingly tied to AI infrastructure and cloud capacity — has continued to grow sharply.

Oracle and the Cost of AI Infrastructure

(Source - Reuters)

The same pattern is visible at Oracle, which has been expanding its data center footprint while restructuring parts of its workforce.

According to reporting from Reuters, the layoffs are being driven in part by the rising cost of building large-scale AI infrastructure to serve major clients and partnerships. As a result, Oracle is reportedly planning to raise tens of billions of dollars to fund these expansions while simultaneously reducing staff to free up capital.

Funding that infrastructure requires tradeoffs. Workforce reductions can free capital that can then be redirected toward long-term infrastructure investments.

AI Changes the Cost Structure

When companies reduce headcount while increasing AI adoption, costs do not disappear.

They move.

Work that once required human labor increasingly becomes automated through software systems. Customer support interactions can be handled by AI agents. Analysts can rely on automated data pipelines. Engineers can build internal tools more quickly using AI-assisted development environments.

The economic result is subtle but significant.

What was previously a fixed labor cost becomes a variable infrastructure cost.

Instead of salaries, organizations increasingly pay for compute resources, model inference, orchestration platforms, data pipelines, and AI services.

Those costs scale with usage.

Without careful measurement, they can grow rapidly.

This is exactly the environment where FinOps becomes essential.
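The move from a fixed labor cost to a usage-based infrastructure cost can be sketched in a few lines of Python. Every figure below is hypothetical and chosen only for illustration, and the function names are made up for this example rather than taken from any library. The sketch finds the request volume at which a usage-based AI cost overtakes a flat monthly cost.

```python
def variable_cost(unit_cost: float, volume: int) -> float:
    """Usage-based cost: grows linearly with the number of requests."""
    return unit_cost * volume


def break_even_volume(fixed_cost: float, unit_cost: float) -> float:
    """Request volume at which the usage-based cost equals the fixed cost."""
    if unit_cost <= 0:
        raise ValueError("unit_cost must be positive")
    return fixed_cost / unit_cost


# Hypothetical inputs, for illustration only.
FIXED_MONTHLY_COST = 50_000.0  # flat monthly cost of a team
UNIT_COST = 0.25               # cost per AI-handled request

threshold = break_even_volume(FIXED_MONTHLY_COST, UNIT_COST)
print(f"Break-even volume: {threshold:,.0f} requests per month")

for volume in (50_000, 200_000, 400_000):
    cost = variable_cost(UNIT_COST, volume)
    status = "above" if cost > FIXED_MONTHLY_COST else "at or below"
    print(f"{volume:>7,} requests: ${cost:,.0f} ({status} the fixed baseline)")
```

The point of the sketch is the shape of the curve, not the numbers: below the break-even volume the variable model is cheaper, and above it the bill keeps growing with every additional request.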

Why This Matters for FinOps

In SaaS and cloud-native companies, gross margin is heavily influenced by infrastructure.

Compute, storage, networking, and data processing pipelines all sit inside the cost of delivering the product.

AI introduces a new and rapidly expanding category of infrastructure cost: inference.

Every time a user interacts with an AI feature — submitting a prompt, retrieving an embedding, or triggering an automated workflow — compute resources are consumed.

For organizations building AI-enabled products, the central operational question becomes:

Does AI reduce the cost of delivering value, or increase it?

Answering that question requires understanding unit economics.

Practitioners need visibility into metrics such as:

cost per inference request

infrastructure cost per feature

cost per AI interaction

cost per transaction

These measurements allow teams to determine whether automation is improving the economics of the business or quietly eroding margins.
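As a rough illustration of how these metrics might be derived, the Python sketch below rolls up usage records into a total cost and a cost per request for each feature. The `UsageRecord` shape, the feature names, and all numbers are assumptions made for this example; real billing exports and attribution rules will look different.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    feature: str   # product feature that triggered the AI call (hypothetical names)
    requests: int  # inference requests in the period
    cost: float    # infrastructure cost attributed to those requests


def cost_per_request_by_feature(records):
    """Return {feature: (total_cost, cost_per_request or None if no requests)}."""
    total_cost = defaultdict(float)
    total_requests = defaultdict(int)
    for record in records:
        total_cost[record.feature] += record.cost
        total_requests[record.feature] += record.requests
    return {
        feature: (
            total_cost[feature],
            total_cost[feature] / total_requests[feature]
            if total_requests[feature]
            else None,
        )
        for feature in total_cost
    }


# Hypothetical sample data, for illustration only.
records = [
    UsageRecord("chat", 1_000, 50.0),
    UsageRecord("chat", 3_000, 150.0),
    UsageRecord("summaries", 500, 100.0),
]

metrics = cost_per_request_by_feature(records)
for feature, (total, unit) in sorted(metrics.items()):
    unit_text = f"${unit:.4f}" if unit is not None else "n/a"
    print(f"{feature}: total ${total:,.2f}, {unit_text} per request")
```

Grouping by feature is one possible choice. The same roll-up works per customer, per transaction type, or per model, depending on which unit the business actually sells.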

The Industry Signal

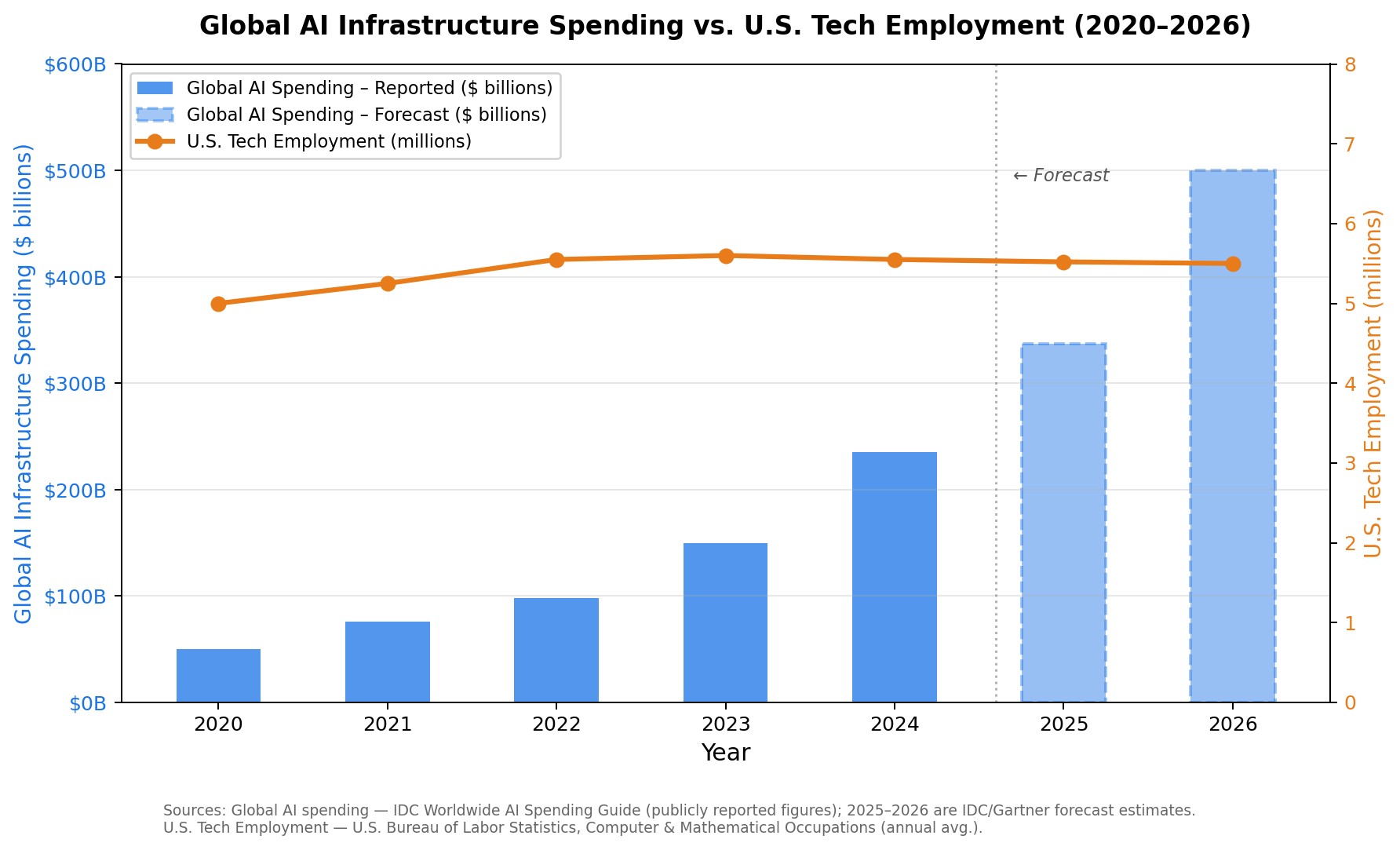

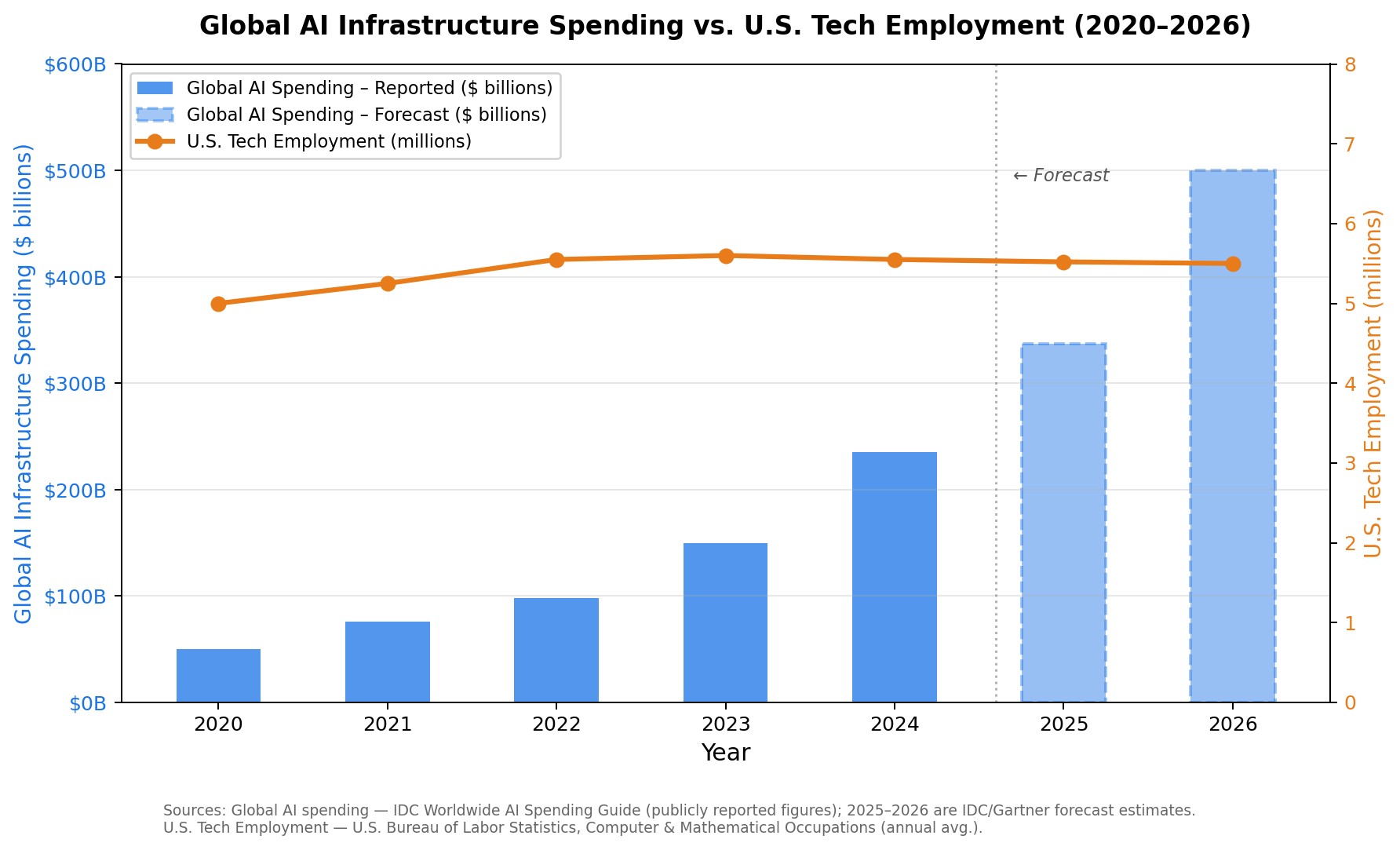

The divergence between infrastructure investment and hiring becomes clearer when viewed across the broader industry.

Global technology companies are investing heavily in AI infrastructure while hiring growth slows following the pandemic hiring surge.

At the same time, AI is increasingly cited as a factor in corporate restructuring. In 2025 alone, automation and AI were associated with nearly 50,000 announced U.S. job cuts, according to labor market data compiled by Challenger, Gray & Christmas.

Chart: AI Infrastructure Investment vs Tech Employment

The Signal Beneath the Headlines

Artificial intelligence will reshape how software companies operate.

Automation will reduce certain forms of labor and accelerate development cycles across engineering, product, and operations.

But AI does not eliminate economic discipline.

Some layoffs may reflect genuine productivity gains. Others reflect slower growth, margin pressure, or shifts in capital allocation.

What recent moves from companies like Block, Amazon, and Oracle suggest is something deeper.

Technology companies are moving toward operating models where infrastructure becomes the dominant driver of cost.

In that environment, understanding the economics of cloud, SaaS, and AI systems becomes central to running a business.

That visibility — into cost, efficiency, and unit economics — is exactly where FinOps lives.

FinOps Signal

Structural Trend Quick Takeaway

AI Investment vs Tech Hiring

What the chart shows

Global AI spending is rising rapidly while tech hiring has flattened and is beginning to decline following the pandemic hiring surge.

Why it matters

As companies scale AI infrastructure, compute increasingly becomes the dominant cost driver in software businesses.

FinOps takeaway

Organizations must begin tracking metrics such as:

cost per inference

infrastructure cost per feature

AI workload utilization

These determine whether AI adoption improves or erodes margins.

FinOps Industry

News or Market Updates

State of FinOps 2026 — Signals

The latest State of FinOps data suggests the discipline is expanding well beyond its original cloud-cost roots. Three themes stand out. FinOps is broadening into technology spend governance, with practitioners increasingly managing SaaS licensing, private cloud, and AI alongside public cloud infrastructure. AI cost management has rapidly become a central concern, with most organizations now tracking or preparing to track AI-related spending. And FinOps appears to be moving higher in the organization, with more teams reporting into CTO/CIO leadership and participating earlier in technology decisions. This is all great to see.

What's interesting is what the survey data points toward, even if it doesn't address it directly. Respondents are clearly aware of AI spend as a category, but the conversation around AI unit economics (metrics like cost per inference or cost per AI feature) appears to still be in its early stages across the industry. Similarly, the broader shift from labor-driven scaling to compute-driven scaling is a dynamic that may not yet be fully visible to practitioners in the middle of it. And while the data shows FinOps moving closer to CTO/CIO leadership, the question of who ultimately owns the mandate to drive cost changes, not just report on them, remains open. In many organizations, FinOps still functions as an advisory layer rather than an execution authority, producing insights but lacking clear accountability for implementation. The discipline also lacks a widely adopted set of standardized cost efficiency concepts, analogous to SLAs or SLOs, that would create measurable ownership and operational accountability for technology spend.

That tension — and what real accountability for technology spend might look like — will be the focus of next week's issue.

FinOps Company Spotlight

Featured from the FinOps Directory, built and maintained by CloudXray AI.

Company: CloudForecast

Category: Cloud Cost Visibility & Reporting

What They Do: CloudForecast is a cloud cost visibility platform designed specifically for engineering-led organizations that lack dedicated FinOps resources.

Why It Matters: It increases engineering speed and clarity, enabling teams to understand cloud cost changes in seconds rather than hours.

Operator Playbook

Suggestion: Start Measuring AI Interaction Cost

As AI features move into production, one simple metric can provide an early signal of how those systems affect your economics: cost per AI interaction.

Start with a simple calculation:

Total AI infrastructure cost / total AI interactions

For AI infrastructure cost, include items such as model tokens or API usage, inference compute, vector databases, and supporting storage or orchestration.

An AI interaction typically represents one completed request from a user — for example a prompt-and-response cycle in a chat interface, a generated summary, or an automated workflow triggered by an AI model. Determine what works best for your business.

If you spend $20,000 per month across those cost categories and log 200,000 interactions (as defined by your business), the baseline unit cost is $0.10 per interaction.
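The same calculation can be written as a short Python sketch. The $20,000 total and the 200,000 interactions come from the example above; the split of that total across cost categories is hypothetical, as is the function name.

```python
from typing import Mapping


def cost_per_interaction(cost_components: Mapping[str, float], interactions: int) -> float:
    """Total AI infrastructure cost divided by total AI interactions."""
    if interactions <= 0:
        raise ValueError("interactions must be a positive count")
    return sum(cost_components.values()) / interactions


# Monthly cost by category. The breakdown is a hypothetical split
# that adds up to the $20,000 used in the example.
monthly_costs = {
    "model_tokens_or_api_usage": 12_000.0,
    "inference_compute": 5_000.0,
    "vector_databases": 2_000.0,
    "storage_and_orchestration": 1_000.0,
}

unit_cost = cost_per_interaction(monthly_costs, 200_000)
print(f"${unit_cost:.2f} per interaction")  # prints "$0.10 per interaction"
```

Keeping the cost categories as separate entries, rather than a single total, makes it easier to see later which component is driving a change in the unit cost.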

The goal isn’t perfect precision. It’s to establish a baseline metric that engineering and product teams can observe as usage grows.

Over time, this measurement helps answer a critical question:

Is AI improving the economics of the product, or quietly increasing the cost of delivering it?